The NVIDIA Vera Rubin NVL72 rack is currently quoted at $5 million to $7 million per unit, with roughly $1 million of that tied to networking gear, according to supply chain estimates reported by Tom’s Hardware. The platform bundles 72 Rubin GPUs, 36 custom 88-core Vera CPUs, NVLink 6 switches, ConnectX-9 SuperNICs, and BlueField-4 DPUs into a liquid-cooled Oberon rack. Full production shipments are scheduled for the second half of the year, with cloud availability through AWS, Google Cloud, Microsoft Azure, Oracle, CoreWeave, and Lambda. Performance targets are 3.6 exaFLOPS of dense FP4 and 1.2 exaFLOPS of FP8 per rack, roughly 3.3 times the FP4 inference throughput of GB300 NVL72.

Vera Rubin Pricing at a Glance

Rack-scale AI systems are sold as fully integrated systems, not as single GPUs, which is why quoted prices land in the millions rather than the thousands.

- Vera Rubin NVL72 (VR200): $5M to $7M per rack

- Networking included in rack quote: approximately $1M

- Vera Rubin Ultra NVL144 (VR300, late 2028): $7M to $8.8M forecasted

- Blackwell GB200 NVL72 (reference): $2.8M to $3.4M

- Blackwell GB300 NVL72 (reference): $6M to $6.5M

- Cooling system per NVL144 rack: approximately $55,710 (Morgan Stanley)

Prices come from supply chain sources cited by Tom’s Hardware and DigiTimes. NVIDIA has never published an official list price for any NVL72 or NVL144 product.

Enterprise deals are negotiated directly, and hyperscalers secure favorable volume terms that are not reflected in these quotes.
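To put the quoted range in perspective, the short sketch below works out what share of the rack quote the networking estimate represents and what the quote comes to when spread evenly over the 72 GPU packages. It uses only the supply-chain figures listed above; the function names are illustrative, and the per-package number is a rough accounting figure, not a price at which a Rubin GPU can be bought.

```python
# Supply-chain estimates quoted in this article; none are official NVIDIA list prices.
RACK_PRICE_RANGE_USD = (5_000_000, 7_000_000)  # Vera Rubin NVL72 quote range
NETWORKING_USD = 1_000_000                     # approximate networking portion
GPU_PACKAGES = 72                              # Rubin packages per NVL72 rack


def networking_share(rack_price_usd: float) -> float:
    """Fraction of the rack quote attributed to networking gear."""
    return NETWORKING_USD / rack_price_usd


def quote_per_package(rack_price_usd: float) -> float:
    """Rack quote divided evenly across GPU packages (not a standalone GPU price)."""
    return rack_price_usd / GPU_PACKAGES


for price in RACK_PRICE_RANGE_USD:
    print(
        f"${price / 1e6:.0f}M rack: "
        f"networking {networking_share(price):.0%}, "
        f"${quote_per_package(price):,.0f} per GPU package"
    )
```

On these estimates, networking accounts for roughly 14 to 20 percent of the rack quote, and the quote works out to about $69,000 to $97,000 per GPU package once CPUs, switches, and the rack itself are folded in.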

Gamers looking at consumer NVIDIA hardware should instead check the RTX 5070 Ti original price or explore compact workstations covered in our roundup of NVIDIA DGX Spark alternatives, which sit in an entirely different product tier than rack-scale AI infrastructure.

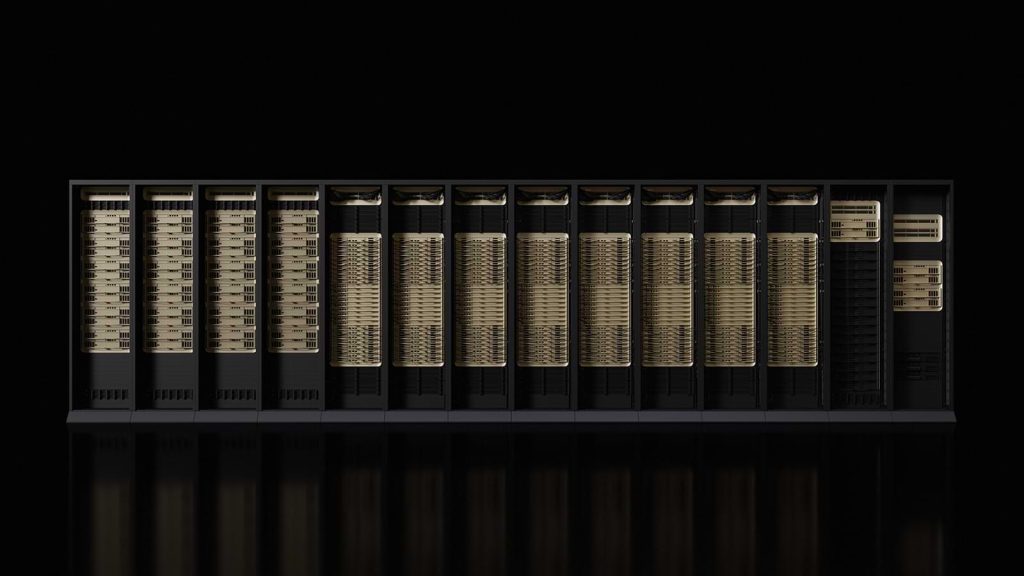

Vera Rubin NVL72 Specs and Architecture

The platform combines three core chip types: the Rubin GPU, the Vera CPU, and the networking silicon that ties them together into a single rack-scale unit. Each Rubin GPU is a dual-die package using TSMC’s 3nm node with HBM4 memory, while Vera is NVIDIA’s second-generation Arm server CPU succeeding Grace.

Key hardware specs:

- Rubin GPU: 50 PFLOPS dense FP4 per package, 288 GB HBM4, 13 TB/s memory bandwidth

- Vera CPU: 88 custom Arm cores, 176 threads via spatial multithreading

- NVLink-C2C interconnect: 1.8 TB/s coherent CPU-to-GPU link

- NVLink 6 switch: 3.6 TB/s all-to-all scale-up bandwidth per GPU

- ConnectX-9 SuperNIC: 1.6 Tb/s per-GPU scale-out bandwidth

- Process node: TSMC 3nm

- Rack: third-generation MGX Oberon, liquid cooled

Vera CPUs share a unified address space with Rubin GPUs, which reduces the data-movement overhead between CPU and GPU memory that used to bottleneck agentic and multi-step inference workloads. Memory bandwidth and interconnect performance matter more than raw FLOPS once a model gets large enough, and the platform is engineered around that reality.
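The per-GPU figures above can be rolled up to rack level with simple multiplication, which also confirms that 72 packages at 50 PFLOPS each give the 3.6 exaFLOPS headline number. The sketch below is a back-of-the-envelope aggregation under the assumption that every per-GPU spec scales linearly across the rack; the `RackSpec` class and its field names are illustrative, not part of any NVIDIA tool.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RackSpec:
    """Per-device figures quoted in the spec list above."""
    gpu_packages: int = 72
    cpus: int = 36
    fp4_pflops_per_gpu: float = 50.0   # dense FP4
    hbm_gb_per_gpu: int = 288
    hbm_tbps_per_gpu: float = 13.0     # memory bandwidth
    nvlink_tbps_per_gpu: float = 3.6   # scale-up bandwidth
    cores_per_cpu: int = 88


def rack_totals(spec: RackSpec) -> dict[str, float]:
    """Naive linear roll-up of per-device specs to a whole rack."""
    return {
        "fp4_exaflops": spec.gpu_packages * spec.fp4_pflops_per_gpu / 1000,
        "hbm_tb": spec.gpu_packages * spec.hbm_gb_per_gpu / 1000,
        "hbm_bandwidth_tbps": spec.gpu_packages * spec.hbm_tbps_per_gpu,
        "nvlink_tbps": spec.gpu_packages * spec.nvlink_tbps_per_gpu,
        "cpu_cores": spec.cpus * spec.cores_per_cpu,
        # Bytes of HBM bandwidth available per dense FP4 operation, per GPU.
        "hbm_bytes_per_fp4_flop": spec.hbm_tbps_per_gpu / (spec.fp4_pflops_per_gpu * 1000),
    }


for name, value in rack_totals(RackSpec()).items():
    print(f"{name}: {value:g}")
```

The last entry is the interesting one: at about 0.00026 bytes of HBM bandwidth per FP4 operation, a workload has to reuse each byte it loads thousands of times to keep the math units busy, which is why bandwidth rather than peak compute tends to set the ceiling for large models.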

Full Comparison Table

| Feature | GB300 NVL72 | Vera Rubin NVL72 | Vera Rubin NVL144 CPX | Rubin Ultra NVL144 |
| --- | --- | --- | --- | --- |
| GPU packages (per rack) | 72 | 72 | 72 + 144 CPX | 144 |
| CPUs (per rack) | 36 Grace | 36 Vera (88 cores each) | 36 Vera | 36+ Vera |
| Dense FP4 compute (per rack) | ~1.1 EF | 3.6 EF | 8 EF | 15 EF target |
| Memory (per GPU) | 288 GB HBM3e | 288 GB HBM4 | 288 GB HBM4 (Rubin), 128 GB GDDR7 (CPX) | 1 TB HBM4e |
| NVLink bandwidth (per GPU) | 1.8 TB/s | 3.6 TB/s | 3.6 TB/s | 3.6 TB/s |
| Rack power (approx.) | ~130 kW | ~120-130 kW | ~370 kW | ~600 kW |
| Rack price (approx.) | $6M-$6.5M | $5M-$7M | TBD | $7M-$8.8M |
| Availability | Shipping | Second half of the year | End of the year | Late 2028 |
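One way to read the table is to divide dense FP4 compute by rack power for the three Rubin-generation configurations. The sketch below does that using the table's approximate values; the 125 kW figure for the NVL72 is the midpoint of the quoted range and is an assumption, and the Rubin Ultra compute number is a target, so the results are rough ratios rather than measured efficiency.

```python
# (dense FP4 exaFLOPS, approximate rack power in kW) from the comparison table.
# The NVL72 power value is the midpoint of the quoted 120-130 kW range (assumption).
RACKS = {
    "Vera Rubin NVL72": (3.6, 125.0),
    "Vera Rubin NVL144 CPX": (8.0, 370.0),
    "Rubin Ultra NVL144": (15.0, 600.0),  # compute is a target, not a shipping spec
}


def pflops_per_kw(exaflops: float, rack_kw: float) -> float:
    """Dense FP4 petaFLOPS delivered per kilowatt of rack power."""
    return exaflops * 1000 / rack_kw


for name, (exaflops, rack_kw) in RACKS.items():
    print(f"{name}: {pflops_per_kw(exaflops, rack_kw):.1f} PFLOPS per kW")
```

On these inputs the base NVL72 comes out at about 28.8 PFLOPS per kW, the CPX rack at about 21.6, and Rubin Ultra at 25.0. Peak FP4 per kilowatt does not capture what the CPX GPUs are for, which is prefill on long-context workloads, so the lower CPX ratio should not be read as lower real-world efficiency.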

Release Date and Cloud Availability

NVIDIA kicked off the Rubin platform at CES in Las Vegas, with Jensen Huang confirming full production and samples already in customer hands. Rack-scale shipments to partners land in the second half of the year, and the specialized Rubin CPX variant arrives at year-end.

First cloud deployments are rolling out through AWS, Google Cloud, Microsoft Azure, and Oracle Cloud Infrastructure, plus CoreWeave, Lambda, Nebius, and Nscale. Microsoft confirmed that future Fairwater AI superfactory sites will deploy Vera Rubin NVL72 racks at scale.

Enterprise buyers should expect lead times of six to twelve months for on-premises deployments, given the power and cooling upgrades required to handle racks that draw 120 kW or more.

Vera Rubin vs Blackwell Performance

NVIDIA claims the Rubin generation trains equivalent models with a quarter of the GPUs, and runs inference at roughly one-tenth the cost per million tokens of Blackwell. These numbers come from NVIDIA benchmarks and represent ideal-case scenarios.

Throughput gains at the rack level:

- 3.3x FP4 compute over GB300 NVL72

- Up to 5x better inference performance per watt

- 10x lower cost per million tokens (NVIDIA figure)

- 7.5x performance with NVL144 CPX variant over GB300 NVL72

- $5 billion in token revenue per $100 million invested (NVIDIA ROI claim)

Real-world gains depend heavily on the model, batch size, and whether workloads take advantage of CPX prefill acceleration. Mixture-of-experts and long-context reasoning systems benefit most, while smaller standard inference workloads see smaller improvements.
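NVIDIA's headline ratios are easier to evaluate when applied to a concrete baseline. The sketch below converts the three vendor claims (a quarter of the GPUs for training, one-tenth the cost per million tokens, and $5 billion of token revenue per $100 million invested) into absolute numbers. The baseline inputs in the example, a 10,000-GPU Blackwell training cluster and a $2.00 cost per million tokens, are hypothetical values chosen for illustration, and the ratios are NVIDIA's ideal-case figures rather than measured results.

```python
import math

# Ratios as claimed by NVIDIA (ideal-case vendor figures).
TRAINING_GPU_FRACTION = 0.25     # "a quarter of the GPUs"
INFERENCE_COST_FRACTION = 0.10   # "one-tenth the cost per million tokens"
REVENUE_MULTIPLE = 5_000_000_000 / 100_000_000  # $5B revenue per $100M invested


def rubin_training_gpus(blackwell_gpus: int) -> int:
    """GPUs needed on Rubin if the quarter-of-the-GPUs claim holds exactly."""
    return math.ceil(blackwell_gpus * TRAINING_GPU_FRACTION)


def rubin_cost_per_million_tokens(blackwell_cost_usd: float) -> float:
    """Cost per million tokens on Rubin if the 10x claim holds exactly."""
    return blackwell_cost_usd * INFERENCE_COST_FRACTION


# Hypothetical baseline values, for illustration only.
print(rubin_training_gpus(10_000))                    # GPUs
print(f"{rubin_cost_per_million_tokens(2.00):.2f}")   # USD per million tokens
print(f"{REVENUE_MULTIPLE:.0f}x")                     # claimed revenue multiple
```

With those hypothetical inputs, the claims translate to 2,500 GPUs, $0.20 per million tokens, and a 50x revenue multiple. Workloads that do not match NVIDIA's benchmark conditions should be expected to land somewhere between the Blackwell baseline and these best-case values.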

Power Consumption and Cooling

Performance this generation comes with a real energy cost. Each Rubin GPU ships with two power profiles: Max-Q at 1,800W for efficiency and Max-P at 2,300W for peak throughput.

- Rubin GPU TDP (Max-Q): 1,800W

- Rubin GPU TDP (Max-P): 2,300W

- NVL72 rack power: approximately 120 to 130 kW

- NVL144 CPX rack power: approximately 370 kW

- Rubin Ultra NVL144 (future Kyber rack, also referred to as NVL576 when counting GPU dies): approximately 600 kW per rack

- Cooling system cost per NVL144 rack: approximately $55,710

Max-P draws roughly 28 percent more power than Max-Q (2,300W versus 1,800W) for performance gains that fall short of that figure, which is why NVIDIA markets Max-Q as the sweet spot for large fleets.
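The trade-off between the two profiles comes down to a ratio: 2,300W is about 27.8 percent more than 1,800W, so Max-P only improves performance per watt if it delivers more than that in extra throughput. The sketch below computes that break-even point and the GPU-only power draw of a 72-package rack under each profile. It assumes every GPU runs at its full TDP and ignores CPUs, switches, NICs, and cooling, so the rack figures are partial sums, not full rack power.

```python
MAX_Q_WATTS = 1_800   # efficiency profile TDP
MAX_P_WATTS = 2_300   # peak-throughput profile TDP
GPU_PACKAGES = 72     # Rubin packages per NVL72 rack


def extra_power_fraction(base_watts: float, peak_watts: float) -> float:
    """Additional power of the peak profile relative to the base profile."""
    return (peak_watts - base_watts) / base_watts


def gpu_only_rack_kw(watts_per_gpu: float) -> float:
    """Power drawn by the GPUs alone, assuming all run at full TDP."""
    return GPU_PACKAGES * watts_per_gpu / 1000


extra = extra_power_fraction(MAX_Q_WATTS, MAX_P_WATTS)
print(f"Max-P extra power: {extra:.1%}")
print(f"Break-even speedup for equal perf per watt: {1 + extra:.3f}x")
print(f"GPU-only rack draw at Max-Q: {gpu_only_rack_kw(MAX_Q_WATTS):.1f} kW")
print(f"GPU-only rack draw at Max-P: {gpu_only_rack_kw(MAX_P_WATTS):.1f} kW")
```

The GPU-only totals come to 129.6 kW at Max-Q and 165.6 kW at Max-P. Since the Max-Q figure alone sits at the top of the 120 to 130 kW rack estimate, that estimate is best treated as approximate and tied to the efficiency profile; a rack running Max-P would draw noticeably more.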

HBM4 memory supply remains tight, which adds to pricing pressure. Commodity memory spot pricing, such as CXMT DDR5 RAM prices, shows how upstream shortages ripple across every part of the AI stack.

NVDA Stock Price Context

NVIDIA trades under the ticker NVDA on Nasdaq. Shares sit near $201 in recent trading, up roughly 90 percent over the prior twelve months and more than 10x over five years. The 52-week range spans $95.04 to $212.19.

Market cap hovers near $4.82 trillion, placing NVIDIA as the world’s most valuable publicly traded company by a wide margin. Analyst consensus price targets average around $268, with highs near $380 and lows near $140.

Vera Rubin revenue is expected to show up in results from Q3 onward, assuming production stays on track.

Conversational Queries Users Actually Type

How people search for this hardware says a lot about what they actually need to know:

“How much does one Vera Rubin rack cost?”

Answer: $5M to $7M per rack

“Is Vera Rubin available yet?”

Answer: In full production, shipments planned for the second half of the year

“What is the difference between NVL72 and NVL144 CPX?”

Answer: CPX adds 144 prefill GPUs for long-context inference

“How much power does a Rubin GPU use?”

Answer: 1,800W (Max-Q) to 2,300W (Max-P)

“Is Rubin better than Blackwell?”

Answer: Yes, with 3.3x the FP4 compute and roughly 10x lower token costs, according to NVIDIA

“Can I buy a Vera Rubin GPU for gaming?”

Answer: No, this is a datacenter-only platform with no consumer SKU

Users searching for consumer hardware should look elsewhere, since Rubin has no retail SKU. Competing silicon from Axera and other edge AI chip vendors targets a different tier of the market entirely.

Common Mistakes Buyers and Readers Make

- Confusing the NVL72 name with GPU count: an NVL72 rack has 72 Rubin packages but 144 reticle-sized GPU dies

- Assuming “Vera Rubin” is a consumer GPU: this is a datacenter AI platform, not an RTX replacement

- Comparing per-GPU prices: rack-scale systems include networking, cooling trays, and software that a standalone GPU does not

- Ignoring power costs: a 130 kW rack running continuously uses about 1.14 GWh per year, which costs roughly $125,000 in electricity at U.S. datacenter rates (implying about $0.11 per kWh)

- Mixing up Rubin, Rubin Ultra, and Rubin CPX: three distinct product lines with different release windows and use cases

- Treating NVIDIA’s 10x inference claim as universal: gains depend on workload type, especially long-context versus short-batch

- Expecting immediate cloud pricing from AWS or Google: spot rates follow deployment capacity, not the announcement cycle
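The power-cost item in the list above is easy to reproduce and adapt to local rates. The sketch below computes annual energy and electricity cost for a rack of a given power draw. The $0.11 per kWh rate is an assumption back-calculated from the article's $125,000 figure, not a quoted tariff, and the calculation covers IT load only, with no allowance for facility overhead such as cooling or power conversion losses.

```python
HOURS_PER_YEAR = 24 * 365  # 8,760 hours, ignoring leap years


def annual_energy_kwh(rack_kw: float, utilization: float = 1.0) -> float:
    """Energy used in a year by a rack drawing rack_kw at the given utilization."""
    return rack_kw * HOURS_PER_YEAR * utilization


def annual_cost_usd(rack_kw: float, usd_per_kwh: float, utilization: float = 1.0) -> float:
    """Electricity cost for the rack's IT load over one year."""
    return annual_energy_kwh(rack_kw, utilization) * usd_per_kwh


def implied_rate_usd_per_kwh(rack_kw: float, annual_cost: float) -> float:
    """Electricity rate implied by a quoted annual cost at full utilization."""
    return annual_cost / annual_energy_kwh(rack_kw)


print(f"{annual_energy_kwh(130):,.0f} kWh per year")
print(f"${annual_cost_usd(130, 0.11):,.0f} per year at an assumed $0.11/kWh")
print(f"${implied_rate_usd_per_kwh(130, 125_000):.3f}/kWh implied by $125,000")
```

A 130 kW rack uses 1,138,800 kWh per year, which comes to about $125,268 at the assumed rate. Swapping in a different rate or a utilization below 1.0 shows how sensitive the annual bill is to siting and duty cycle.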